A long time ago I was settling into a new strategic role within DataScience following the best part of a decade as consultant/programmer then General Manager of the Decision Support Software business. Part of that new role involved getting parachuted in to spend time with key clients who wanted a more intellectual conversation than the responsible account executive was willing or able to provide. In terms of corporate survival it is a useful reputation to acquire but I had to put up with some gentle ribbing as a result. At one sales conference I was satirised (affectionately I think) as the thinking woman’s thinking person. You have to be of a certain age and British to get that reference. One of those clients was John Taylor, then Chief Executive of the Dental Practice Board. We got on from the day we realised we were the only two people in the room who knew that Isaac Newton had considered his alchemy as important as, if not more important than, his scientific work. Worse still we were the only two who even cared about it, or thought it was significant. It was for John that I created the first version of the known-unknown-unknowable model that was an early predecessor of Cynefin. In a modified form, and possibly a parallel invention, the nomenclature became notorious and I’ve only recently started to use and develop it.

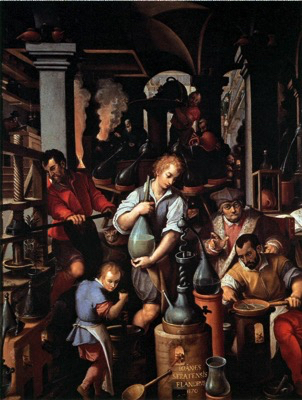

So when I was searching for an image for this follow-up to yesterday’s post that conversation came back to me. In consequence I chose An Alchemist’s Laboratory by Jan van der Straet given that the word pseudo-science first came into use in the Eighteenth Century to describe Alchemy. As a word it comes from the Greek root pseudo, meaning false, and the Latin scientia, meaning knowledge. So literally it means false knowledge and is thus a pejorative epithet. To apply it to something that uses true science but does not make any wider claim is a misnomer at best.

Now I know Simon was not being malicious in using the word, but there is a clear need to make the distinction between something that is based in science and can be validated against its sources and something that claims to be a science. Hopefully that is clearer.

Readers may want to skip what follows. I’ve written it up for the record given a link Simon made to a polemic that I am surprised he took seriously. But given he made the link and thereby gave it some credibility I decided to provide a response.

I suspect Simon just did a web search and found Tom’s post but didn’t investigate it further. If he had he would have discovered that Tom was once an enthusiastic advocate of Cynefin, one of a group of over-enthusiastic advocates that have always made me slightly nervous. Tom decided at one point that I didn’t understand Chaos while he, as a self-nominated wizard, did. So he produced a new version of Cynefin. I politely pointed out that he was welcome to create his own framework and acknowledge his sources but not to usurp the Cynefin brand. I had started with Boisot’s I-Space and said as much in my response to Tom. Either way he became heated and said that I didn’t understand chaos as my only solution was to get the hell out of it. I then pointed to several references where I had clearly stated that this was the strategy if you accidentally fell into it, but that deliberate entry was a desirable thing for the purpose of innovation and distributed decision support. So his assertion was wrong and here was the evidence in peer-reviewed articles. That didn’t go down well and my comment with said references was deleted. My protest at this, along with the comment that he was using chaos where I used complex, resulted in a tirade in which he explicitly stated that he hadn’t read any of the articles I referenced and didn’t intend to as doing so made him physically sick. At that point I realised he had a problem and might be better left alone although I haven’t been able to resist the odd poke from time to time. I probably shouldn’t have pointed out that Tom has always self-published and never submitted his material to peer review. But given he was asserting a position of knowledge on what was or was not science it seemed a fair enough point.

I’ve happily ignored Tom’s argument that Cynefin is a pseudo-science over the years and Tom deleted any comment I made on his posts. But as Simon has drawn attention to his views I thought I would run through the pseudo-science criteria that Tom uses by way of expanding on what is or is not pseudo-science in respect of Cynefin. So here goes, with the criteria in italics.

Everything I’ve said above can be verified so I’m surprised Simon gave Tom’s rantings credibility. But I respect Simon so I’ve responded as promised.

Cognitive Edge Ltd. & Cognitive Edge Pte. trading as The Cynefin Company and The Cynefin Centre.

© COPYRIGHT 2024